Work completed by: Tim West, Sukhpal Shergill & Winston Castle

DESCRIPTION

So far we have been looking into the concept of cloud computing, both public and private, and how this approach supports the development of service-based applications (a service-oriented architecture approach).

Both public and private clouds present their services through an application programming interface (API), which developers can use to provision and configure the required infrastructure resources. One of the main issues that has been discussed is vendor lock-in, where use of a provider-specific cloud API ties the consumer to that cloud service provider.

To address this, we will research not only the use of an API to configure the infrastructure but also the technique of brokering, where the cloud provider's API is accessed through a common framework.

This time, Tim, Winston and Sukhpal will work together on the project.

QUESTION

The first part of the project will aim to demonstrate how an infrastructure can be configured to create an elastic cloud infrastructure using the API.

The second part of the project will look at (and hopefully demonstrate) how a broker can be used to control the hosted infrastructure by linking with the provider's API.

DETAILS/LOCATION OF PRACTICAL DEMONSTRABLE OUTPUT

For the first part, we looked at using Amazon's EC2 platform along with Auto-Scale to provide an Elastic application environment for a simple web server. Note: currently this functionality is only available in the us-east-1 region of EC2.

Most if not all providers in the Cloud market also provide APIs for using and administering their cloud environments.

The first step is to download the following APIs from aws.amazon.com and install them on either a Linux or Windows machine:

- EC2

- CloudWatch

- Auto Scaling

- Elastic Load Balancing

The next step is to create an AMI of your application. As we have no application to test, we used one of the standard AMIs provided, which included a web server.

Use the following command to create your load balancer:

elb-create-lb MyLoadBalancer --listener "lb-port=80, instance-port=80, protocol=HTTP" --availability-zones us-east-1b

The first argument is the name of the load balancer. The listener then gives the port on which the load balancer listens for traffic, the port on the instances to which traffic is forwarded, and the protocol. The last argument states which availability zones the load balancer will serve instances from. The command returns the URL of your load balancer.

Next we need to specify how the load balancer will determine which instances are working:

elb-configure-healthcheck MyLoadBalancer --target "HTTP:80/" --interval 30 --timeout 3 --unhealthy-threshold 2 --healthy-threshold 2

The target parameter here states that we send an HTTP request to port 80 and check that a page is returned. The check runs every 30 seconds with a timeout of 3 seconds, and an instance has to pass or fail twice in a row before its state is changed.
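The threshold behaviour described above can be sketched as a small state machine. This is only a minimal illustration in Python, assuming that consecutive results are counted and that any result agreeing with the current state resets the count. The class name, method name and the "OutOfService" starting state are assumptions made for the example and are not part of the Amazon tools.

```python
class HealthTracker:
    """Tracks one instance's state from a stream of health check results."""

    def __init__(self, healthy_threshold=2, unhealthy_threshold=2):
        self.healthy_threshold = healthy_threshold
        self.unhealthy_threshold = unhealthy_threshold
        self.state = "OutOfService"  # assumed starting state until checks pass
        self._streak = 0             # consecutive results that disagree with state

    def record(self, check_passed):
        """Record one check result and return the (possibly updated) state."""
        in_service = self.state == "InService"
        if check_passed == in_service:
            # The result agrees with the current state, so the streak is broken.
            self._streak = 0
            return self.state
        self._streak += 1
        needed = self.unhealthy_threshold if in_service else self.healthy_threshold
        if self._streak >= needed:
            self.state = "OutOfService" if in_service else "InService"
            self._streak = 0
        return self.state


tracker = HealthTracker()
results = [True, True, False, True, False, False]
states = [tracker.record(r) for r in results]
print(states)
```

With both thresholds set to 2, a single failed check between two good ones does not take the instance out of service; only two failures in a row do.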

We next start the configuration of Auto-Scale by defining the instance type and image that Auto-Scale will use when starting new instances:

as-create-launch-config MyLaunchConfiguration --image-id ami-5ee70037 --instance-type m1.small

We then create an Auto-Scale group, stating where the instances are created, the minimum and maximum size of the group and the name of the load balancer to use:

as-create-auto-scaling-group MyAutoScalingGroup --launch-configuration MyLaunchConfiguration --availability-zones us-east-1b --min-size 2 --max-size 20 --load-balancer MyLoadBalancer

The Auto-scaler will now create a group of two instances and put them behind your load balancer.

We now need to specify how and when the cloud will grow and contract:

as-create-or-replace-trigger MyTrigger --auto-scaling-group MyAutoScalingGroup --namespace "AWS/EC2" --measure CPUUtilization --statistic Average --dimension "AutoScalingGroup=MyAutoScalingGroup" --period 60 --lower-threshold 40 --upper-threshold 80 --lower-breach-increment=-1 --upper-breach-increment=1 --breach-duration=300
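Our reading of the trigger parameters is that average CPU utilization is sampled every 60 seconds; if it stays above 80% for 300 seconds one instance is added, and if it stays below 40% for 300 seconds one instance is removed, always within the group's minimum and maximum size. The following Python sketch simulates that reading. It is a simplification under those assumptions, not Amazon's implementation, and the function name `simulate_trigger` is hypothetical.

```python
def simulate_trigger(cpu_samples, period=60, lower=40, upper=80,
                     breach_duration=300, min_size=2, max_size=20):
    """Return the group size after each per-period sample of average CPU."""
    needed = breach_duration // period  # consecutive breaching periods required
    size = min_size
    high = low = 0                      # current run of upper/lower breaches
    sizes = []
    for cpu in cpu_samples:
        high = high + 1 if cpu > upper else 0
        low = low + 1 if cpu < lower else 0
        if high >= needed:
            size = min(max_size, size + 1)  # upper-breach-increment = 1
            high = 0
        elif low >= needed:
            size = max(min_size, size - 1)  # lower-breach-increment = -1
            low = 0
        sizes.append(size)
    return sizes


# Ten minutes of heavy load followed by ten minutes of near-idle CPU.
load = [99] * 10 + [10] * 10
sizes = simulate_trigger(load)
print(sizes)
```

In this simulation the group grows by one instance for every five consecutive busy minutes and shrinks at the same rate once the load has gone, never dropping below the minimum of two.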

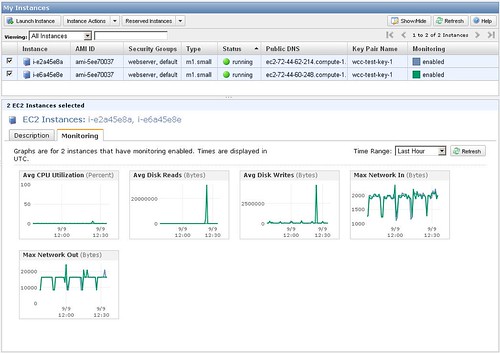

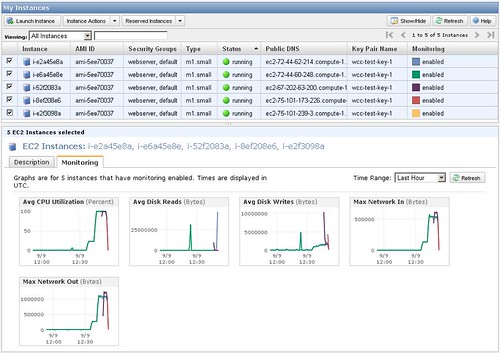

Once the set-up was complete, both the AWS Console and the API showed that we started with 2 instances and little CPU utilization:

$ elb-describe-instance-health MyLoadBalancer

INSTANCE-ID i-e2f3098a InService

INSTANCE-ID i-e6a45e8e InService

2009-09-09 12:30:00 2.0 0.0 Percent

2009-09-09 12:31:00 2.0 0.22 Percent

2009-09-09 12:32:00 2.0 0.445 Percent

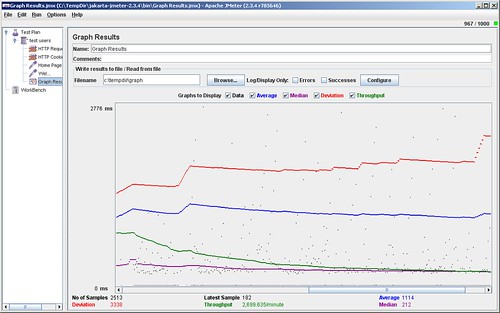

We then started to load the website using JMeter, an open source Java-based application that creates load on a website by hitting it simultaneously with virtual users. JMeter was configured with 1000 users requesting http://myloadbalancer-410033797.us-east-1.elb.amazonaws.com 20 times and then requesting http://myloadbalancer-410033797.us-east-1.elb.amazonaws.com/rails/info/properties. Below is a screen-shot of JMeter just after it started running the tests:

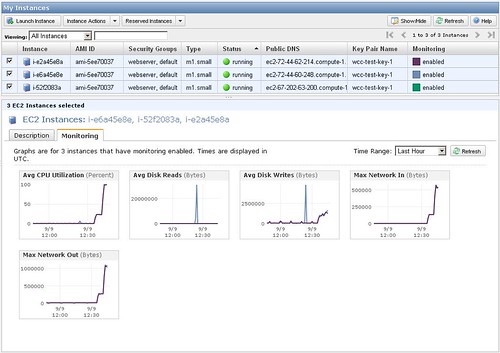

As can be seen, after a couple of minutes another instance was automatically added to the load balancer:

$ elb-describe-instance-health MyLoadBalancer

INSTANCE-ID i-b29369da InService

INSTANCE-ID i-e6a45e8e InService

INSTANCE-ID i-e2a45e8a InService

2009-09-09 12:43:00 2.0 99.285 Percent

2009-09-09 12:44:00 2.0 98.57499999999999 Percent

2009-09-09 12:45:00 2.0 100.0 Percent

2009-09-09 12:46:00 2.0 99.285 Percent

2009-09-09 12:47:00 3.0 94.58666666666666 Percent
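The monitoring lines above can also be read programmatically. The following is a minimal Python sketch, assuming the columns are date, time, sample count, average and unit (the column meanings are inferred from the output, and `parse_datapoint` is a hypothetical helper, not part of the Amazon tools). It finds the first minute at which the sample count rose, which appears to coincide with the third instance joining the group.

```python
from datetime import datetime


def parse_datapoint(line):
    """Split one monitoring line into its assumed fields."""
    date, time, samples, average, unit = line.split()
    return {
        "time": datetime.strptime(f"{date} {time}", "%Y-%m-%d %H:%M:%S"),
        "samples": float(samples),
        "average": float(average),
        "unit": unit,
    }


lines = [
    "2009-09-09 12:43:00 2.0 99.285 Percent",
    "2009-09-09 12:44:00 2.0 98.57499999999999 Percent",
    "2009-09-09 12:45:00 2.0 100.0 Percent",
    "2009-09-09 12:46:00 2.0 99.285 Percent",
    "2009-09-09 12:47:00 3.0 94.58666666666666 Percent",
]
points = [parse_datapoint(line) for line in lines]

# First datapoint whose sample count is higher than the one before it.
grew_at = next(p["time"] for prev, p in zip(points, points[1:])
               if p["samples"] > prev["samples"])
print(grew_at)
```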

Then another was added a couple of minutes later:

$ elb-describe-instance-health MyLoadBalancer

INSTANCE-ID i-b29369da InService

INSTANCE-ID i-a8906ac0 InService

INSTANCE-ID i-e6a45e8e InService

INSTANCE-ID i-e2a45e8a InService

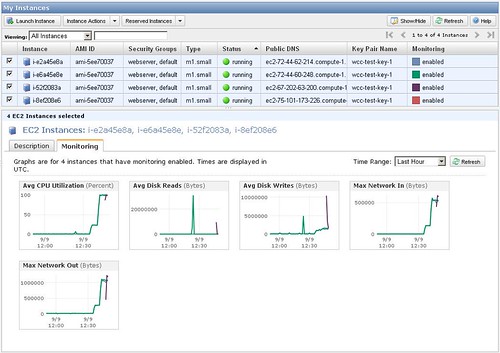

Then another:

$ elb-describe-instance-health MyLoadBalancer

INSTANCE-ID i-e2f3098a InService

INSTANCE-ID i-8ef208e6 InService

INSTANCE-ID i-52f2083a InService

INSTANCE-ID i-e6a45e8e InService

INSTANCE-ID i-e2a45e8a InService

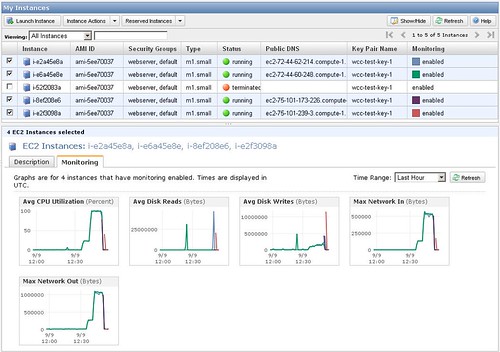

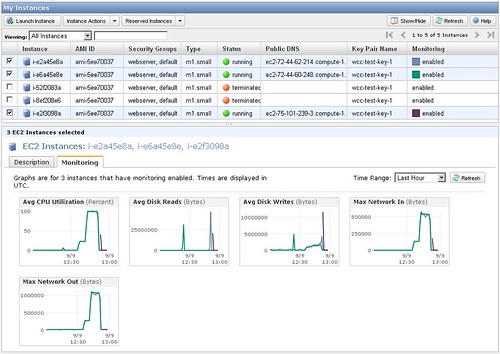

Once JMeter had finished running, the load dropped and the instances were slowly removed:

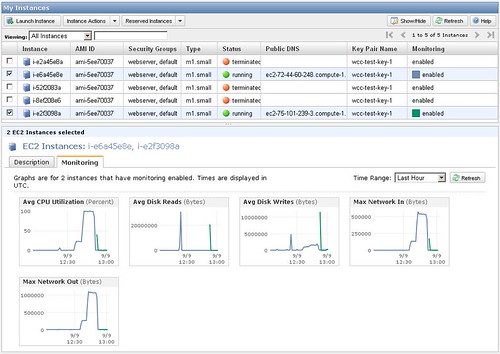

Until we were back to running just two instances.

For the second part, we looked at www.cloudkick.com as a front end for multiple Cloud providers. They currently support Amazon EC2, Rackspace and Slicehost (Slicehost is now owned by Rackspace). The service is currently free; more advanced functionality is planned, but this will be chargeable.

As we already have an Amazon account we configured Cloudkick with our account details and all our servers appeared within the Cloudkick web interface.

From this web interface you can find basic information about each of the instances, set up very basic monitoring of those instances, view graphs of the monitored parameters, start a terminal session to the instances, and reboot or destroy an instance. You can also create a new instance, provided you want an Ubuntu 8.10 instance located within the us-east-1 region.

Currently this service is very basic and does not offer any functionality not already available through the Amazon AWS Console. The service would be of little or no benefit unless you were already using multiple Cloud providers. This feature set may be different for the Rackspace Cloud environments.

Cloudkick have written an API called libcloud, which provides a pure Python client library for interacting with many of the popular cloud providers. libcloud is an independent free software project licensed under the Apache License (2.0). There are also similar offerings, such as Red Hat's Deltacloud and OpenNebula.
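The brokering idea behind these libraries can be illustrated with a short Python sketch. It does not use libcloud itself: `CloudDriver`, `InMemoryDriver` and `Broker` are hypothetical names, the drivers are in-memory stand-ins rather than real provider adapters, and the "server-1" identifier is made up for the example. The point is only that calling code talks to one common interface while each provider is handled by its own driver.

```python
from abc import ABC, abstractmethod


class CloudDriver(ABC):
    """Common interface the broker exposes, whatever the provider."""

    @abstractmethod
    def list_nodes(self):
        """Return the identifiers of all nodes at this provider."""

    @abstractmethod
    def reboot_node(self, node_id):
        """Reboot one node and return True on success."""


class InMemoryDriver(CloudDriver):
    """Stand-in for a real provider adapter; keeps its nodes in a dict."""

    def __init__(self, nodes):
        self._nodes = dict(nodes)  # node_id -> state

    def list_nodes(self):
        return sorted(self._nodes)

    def reboot_node(self, node_id):
        if node_id not in self._nodes:
            raise KeyError(node_id)
        self._nodes[node_id] = "rebooting"
        return True


class Broker:
    """Routes common operations to whichever driver owns the node."""

    def __init__(self):
        self._drivers = {}

    def register(self, provider, driver):
        self._drivers[provider] = driver

    def list_all_nodes(self):
        return [(provider, node_id)
                for provider in sorted(self._drivers)
                for node_id in self._drivers[provider].list_nodes()]

    def reboot(self, provider, node_id):
        return self._drivers[provider].reboot_node(node_id)


broker = Broker()
broker.register("ec2", InMemoryDriver({"i-e2f3098a": "running",
                                       "i-e6a45e8e": "running"}))
broker.register("rackspace", InMemoryDriver({"server-1": "running"}))  # made-up id
inventory = broker.list_all_nodes()
print(inventory)
```

Moving to a different provider would then mean writing or registering another driver, while code that calls `list_all_nodes` or `reboot` stays unchanged.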

PROJECT OUTCOME

Using the Amazon API to set up an elastic website was relatively easy, and we had it working within a day. Once you have your application written and an AMI produced with it already installed, you can have your website up and running very quickly. None of the functionality shown above is available from Amazon's AWS Console, so you have to use the API to create this set-up.

Using the Cloudkick service to administer your cloud infrastructure was also easy, but its very basic functionality means the service is currently not worth using.

SHORT TERM BENEFITS

As we currently don't use any Cloud services, these APIs are of no immediate use to us.

STRATEGIC IMPLICATIONS

If services like Cloudkick become more feature rich and come to include most, if not all, of the features offered by the Cloud providers, they will help to abstract day-to-day management from the technicalities of each provider's API. This would also help should we need to move from one provider to another, or to use a service from one provider that the others do not offer, without needing to move our whole infrastructure to a new provider.

OTHER NOTES ETC

The following link is to Apache JMeter that was used to load test the web site:

http://jakarta.apache.org/jmeter/